-

Poles Have Their Own LLM

If you ask people which AI models they know, you can expect to hear about those from the largest providers, such as ChatGPT, Gemini, or Claude. In this post, I describe the two most popular Polish LLMs, PLLuM and Bielik, and explain what sets them apart and how you can use them.

-

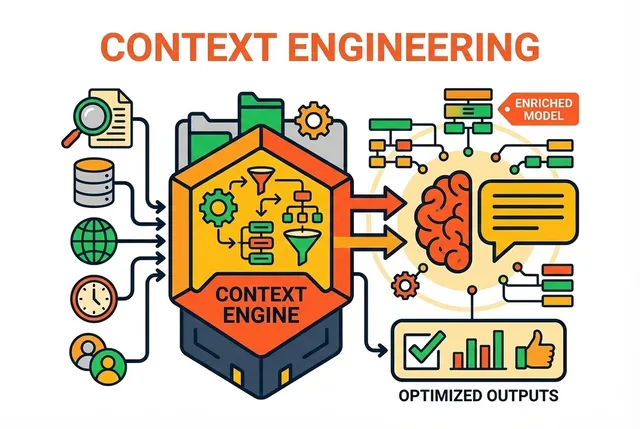

Context Engineering: The Art of Managing AI's Attention

Working effectively with AI depends not only on asking good questions but, above all, on managing information well. Learn what context engineering is, why it is crucial for precise answers, and how it differs from the popular RAG technique. Discover techniques that will make your digital assistant stop wandering and start delivering concrete solutions.

-

Fine-tuning: Specialized AI For Your Needs

How do you turn general-purpose AI models into specialized experts tailored to your needs? Fine-tuning is a process that lets you "train" artificial intelligence in a specific field or communication style, creating solutions adapted to your business or project.

-

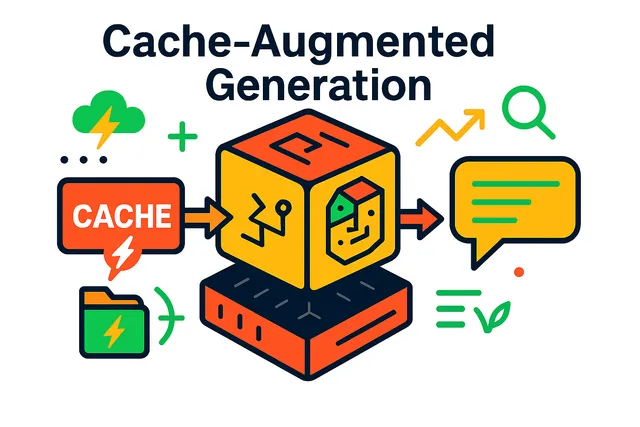

Cache-Augmented Generation (CAG): Speeding Up AI

A technique that speeds up AI models. Learn how it enables near-instant responses and makes AI systems more efficient, especially in applications that must react immediately.

-

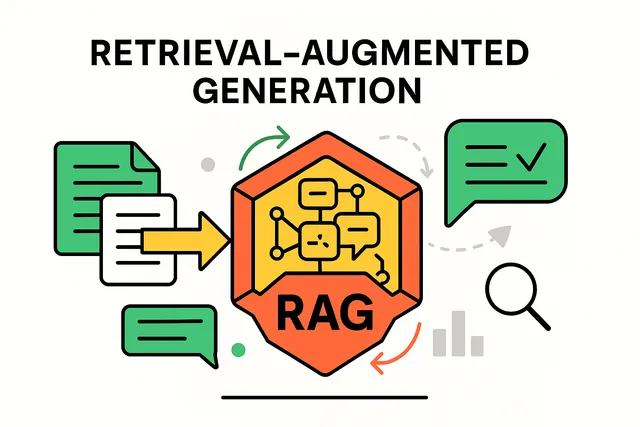

Retrieval-Augmented Generation (RAG): Connecting AI with External Knowledge

A technology that combines AI with external information sources. RAG lets artificial intelligence models draw on current data and specialized knowledge, providing more accurate and reliable answers. It's like talking to an expert who checks facts against trusted sources on the fly.

-

Prompt Engineering: The Art of Asking Questions to Artificial Intelligence

Or how to communicate effectively with AI through well-formulated instructions. Prompt engineering is the simplest way to get better results from artificial intelligence, and it is available to anyone, with no technical knowledge required. Learn how small changes in the way you ask questions can make a huge difference in answer quality.